OmniMMI: A Comprehensive Multi-modal Interaction Benchmark in Streaming Video Contexts

Abstract

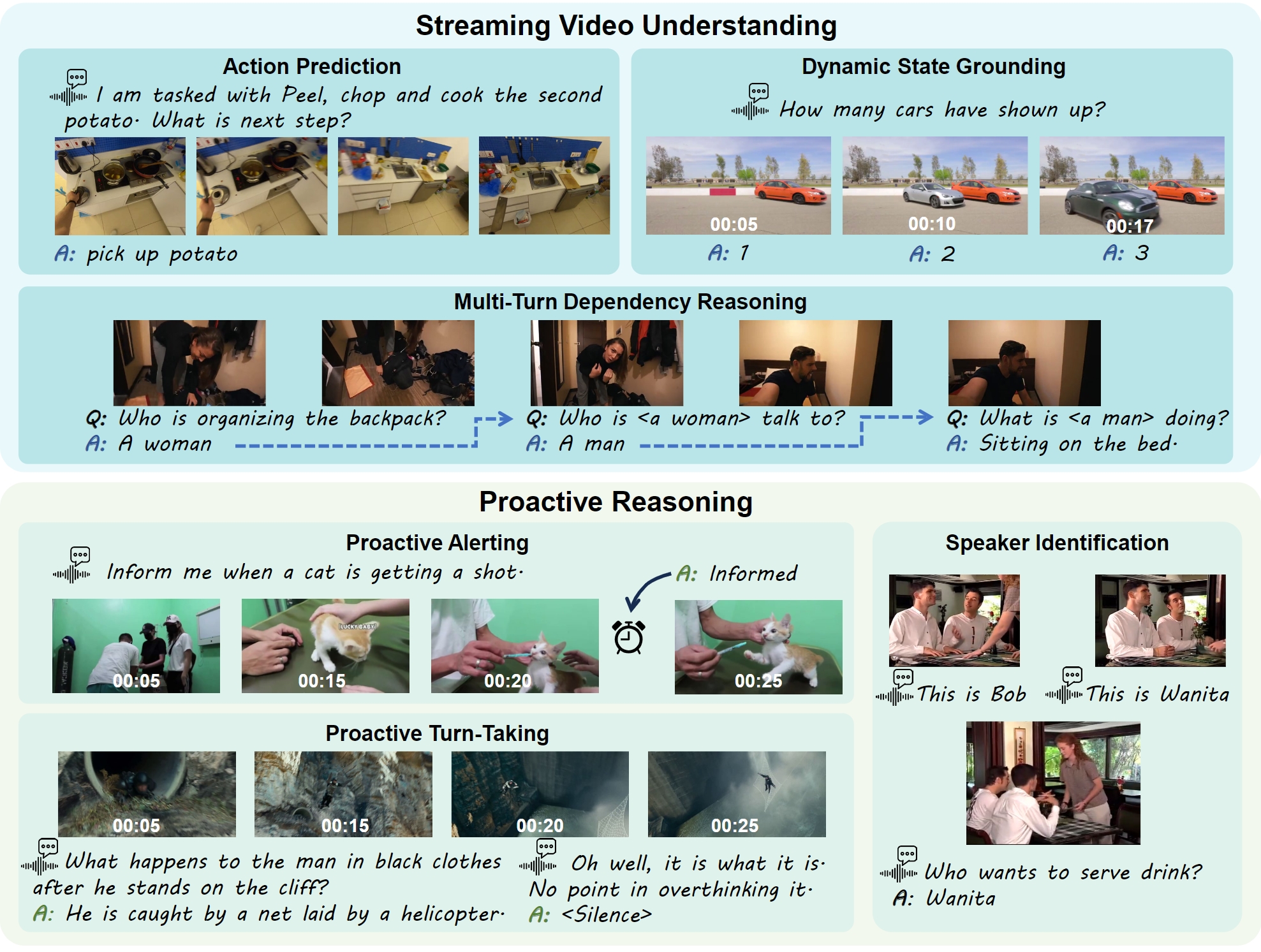

OmniMMI is a cutting-edge benchmark for multi-modal interaction, specifically designed for OmniLLMs in streaming video environments. It includes 1,121 videos and 2,290 questions, tackling the challenges of streaming video understanding and proactive reasoning across six unique subtasks. We introduce Multimodal Multiplexing Modeling (M4), a framework that enhances real-time interactive reasoning with minimal fine-tuning on pre-trained MLLMs.

✨ Highlights:

-

OmniMMI: A Comprehensive Multi-modal Interaction Benchmark

-

Streaming Temporal State Awareness: This requires understanding current and historical video states without future context, challenging tasks like action prediction, state grounding, and multi-turn dependencies.

-

Proactive Reasoning and Turn-Taking: Models must generate responses proactively, identifying speakers, distinguishing noise from queries, and initiating responses appropriately.

-

-

Multimodal Multiplexing Modeling (M4)

-

M4-IT Dataset: A synthetic instruction finetuning dataset with components interleaved image-text instruction, noise instruction, and stop instruction.

-

M4 Model: Enhances proactive response generation, assesses new queries against noise, by enabling parallel decoding.

-

If you're interested in the M4 model, check the introduction here.

Leaderboard of Large Video Language Model on OmniMMI

Submit Your Results: wangyuxuan1@bigai.ai

The leaderboard is ranked by the average score without proactive tasks (avg. w/o P), reflecting the average score across four tasks: SG, AP, MD, and SI. You can click on the task bar to view the rank for a specific subtask.

| Rank | Models | LLM | Num Frames | SG 1st | SG 2nd | SG 3rd | SG avg. | AP | MD 1st | MD 2nd | MD 3rd | MD avg. | SI | PA | PT | avg. w/o P |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 1 | Gemini-1.5-Pro | - | 128 | 52.33 | 19.67 | 9.35 | 16.33 | 43.00 | 35.00 | 16.26 | 7.14 | 12.00 | 38.50 | ✗ | ✗ | 27.46 |

| 2 | GPT-4o | - | 50 | 48.67 | 16.95 | 5.61 | 15.00 | 39.50 | 34.33 | 15.57 | 7.65 | 12.33 | 17.00 | ✗ | ✗ | 20.96 |

| 3 | IXC2.5-OL | Qwen2-1.5B | 512 | 40.33 | 5.08 | 0.00 | 4.03 | 30.50 | 26.00 | 4.50 | 1.52 | 4.00 | 23.00 | ✗ | 14.50 | 15.04 |

| 4 | LongVILA | Llama3-8B | 128 | 39.00 | 4.41 | 0.93 | 4.33 | 39.50 | 39.00 | 4.41 | 0.93 | 3.00 | 10.00 | ✗ | ✗ | 14.21 |

| 5 | M4 | Qwen2-7B | 32 / 1 fps | 35.67 | 6.44 | 1.87 | 5.67 | 33.50 | 35.67 | 6.44 | 1.87 | 1.67 | 9.00 | 25.50 | 62.00 | 12.46 |

| 6 | LongVA | Qwen2-7B | 32 | 33.33 | 4.07 | 0.00 | 3.33 | 37.50 | 33.33 | 4.07 | 0.00 | 2.33 | 3.00 | ✗ | ✗ | 11.54 |

| 7 | LongLLaVA | Jamba-9B | 128 | 36.33 | 3.73 | 0.00 | 3.33 | 29.00 | 36.33 | 3.73 | 0.00 | 3.67 | 10.00 | ✗ | ✗ | 11.50 |

| 8 | LLaMA-VID-13B | Vicuna-13B | 128 | 33.33 | 2.03 | 0.00 | 1.33 | 30.50 | 22.67 | 3.46 | 0.51 | 3.33 | 8.50 | ✗ | ✗ | 10.91 |

| 9 | VideoChatGPT | LLaMA-7B | 100 | 35.33 | 4.7 | 1.87 | 3.33 | 33.50 | 18.00 | 3.11 | 0.51 | 3.00 | 3.50 | ✗ | ✗ | 10.83 |

| 10 | LLaMA-VID | Vicuna-7B | 128 | 29.67 | 2.38 | 0.00 | 2.33 | 29.00 | 21.33 | 3.80 | 0.51 | 2.67 | 7.50 | ✗ | ✗ | 10.38 |

| 11 | VideoLLM-online | Llama3-8B | 1 fps | 18.00 | 4.75 | 0.00 | 4.67 | 35.00 | 18.00 | 4.75 | 0.00 | 1.33 | 0.00 | ✗ | ✗ | 10.25 |

| 12 | PLLaVA-34B | Yi-34B | 16 | 29.00 | 4.07 | 0.00 | 3.67 | 28.50 | 18.67 | 4.50 | 0.00 | 3.00 | 5.00 | ✗ | ✗ | 10.04 |

| 13 | PLLaVA-13B | Vicuna-13B | 16 | 41.33 | 3.39 | 0.00 | 2.67 | 25.00 | 25.67 | 5.54 | 2.04 | 4.33 | 6.50 | ✗ | ✗ | 9.62 |

| 14 | LLaVA-NeXT-Video-34B | Yi-34B | 32 | 30.33 | 2.71 | 0.00 | 2.67 | 32.50 | 14.67 | 2.08 | 0.51 | 1.67 | 1.50 | ✗ | ✗ | 9.59 |

| 15 | VideoLLaMB | Vicuna-7B | 32 / 1 fps | 32.67 | 2.71 | 0.00 | 2.33 | 29.50 | 32.67 | 2.71 | 0.00 | 3.00 | 3.00 | ✗ | ✗ | 9.46 |

| 16 | PLLaVA | Vicuna-7B | 16 | 37.33 | 3.73 | 0.93 | 3.33 | 30.00 | 21.00 | 3.46 | 0.00 | 1.33 | 3.00 | ✗ | ✗ | 9.41 |

| 17 | ShareGPT4Video | Llama3-8B | 16 | 34.00 | 2.03 | 0.93 | 2.00 | 29.00 | 20.33 | 3.46 | 0.00 | 2.00 | 4.50 | ✗ | ✗ | 9.38 |

| 18 | LLaVA-NeXT-Video | Vicuna-7B | 32 | 30.33 | 2.37 | 0.93 | 3.00 | 30.50 | 17.00 | 2.08 | 0.51 | 2.00 | 1.50 | ✗ | ✗ | 9.25 |

| 19 | Video-LLaVA | Vicuna-7B | 8 | 32.00 | 1.69 | 0.00 | 1.67 | 28.00 | 22.67 | 5.19 | 1.02 | 3.33 | 2.50 | ✗ | ✗ | 8.88 |

| 20 | VideoChat2 | Vicuna-7B | 8 | 19.67 | 2.37 | 0.93 | 2.33 | 27.50 | 16.33 | 3.81 | 0.51 | 2.67 | 1.00 | ✗ | ✗ | 8.38 |

| 21 | MiniGPT4-Video | Mistral-7B | 45 | 25.00 | 4.75 | 1.87 | 4.00 | 23.00 | 12.67 | 2.08 | 0.51 | 1.67 | 3.00 | ✗ | ✗ | 7.92 |

Table 1. Performance comparison of existing VideoLLM on OmniMMI. The 1st, 2nd, 3rd of SG and MD tasks represent the cumulative accuracy up to and including these stages. The 'avg.' indicates average accuracy across all data points. 'w/o P.' indicates the overall score across all subtasks except for the proactive task

Task type: SG: Dynamic State Grounding, AP: Action Prediction, MD: Multi-turn Dependency Resasoning, SI: Speaker Identification, PA: Proactive Alerting, PT: Proactive Turn-taking

Leaderboard of Large Omni Language Model on OmniMMI

Submit Your Results: wangyuxuan1@bigai.ai

The leaderboard is ranked by the average score without proactive tasks (avg. w/o P), reflecting the average score across four tasks: SG, AP, MD, and SI. You can click on the task bar to view the rank for a specific subtask.

| Rank | Models | LLM | Num Frames | SG 1st | SG 2nd | SG 3rd | SG avg. | AP | MD 1st | MD 2nd | MD 3rd | MD avg. | SI | PA | PT | avg. w/o P |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 1 | VideoLLaMA2 | Qwen2-7B | 8 | 41.00 | 12.88 | 0.00 | 10.33 | 35.00 | 23.33 | 4.15 | 0.51 | 3.00 | 5.00 | ✗ | ✗ | 13.33 |

| 2 | VITA | Mistrl-8×7B | 16 | 8.67 | 0.00 | 0.00 | 0.00 | 39.00 | 11.33 | 3.11 | 1.52 | 2.00 | 1.50 | ✗ | 67.00 | 10.62 |

| 3 | M4-audio | Qwen2-7B | 32 / 1 fps | 28.33 | 2.37 | 0.00 | 2.00 | 13.00 | 19.33 | 3.11 | 0.51 | 3.00 | 7.50 | 1.50 | 68.50 | 6.38 |

| 4 | MiniOmini2 | Qwen2-0.5B | 1 | 17.00 | 5.08 | 0.93 | 4.67 | 14.00 | 6.00 | 1.00 | 0.00 | 1.00 | 1.00 | ✗ | ✗ | 5.17 |

Table 2. Performance comparison of existing OmniLLM on OmniMMI. The 1st, 2nd, 3rd of SG and MD tasks represent the cumulative accuracy up to and including these stages. The 'avg.' indicates average accuracy across all data points. The 'w/o P.' indicates the overall score across all subtasks except for the proactive task.

Task type: SG: Dynamic State Grounding, AP: Action Prediction, MD: Multi-turn Dependency Resasoning, SI: Speaker Identification, PA: Proactive Alerting, PT: Proactive Turn-taking

Data Samples

We provide a selection of short videos to reduce latency. If you're interested in exploring more, please refer to our complete dataset.

Task: Action Prediction (AP)

I have the duty to Prepare the cooking pot and bowls. The video showcases the task's progress, what do you suggest as my next move?

pour water in cooking pot

Task: Dynamic State Grounding (SG)

What is the current step in the rice cooking process?

1s-2s: Taking out rice

3s-4s: Soaking and washing rice

5s-7s: Putting rice into rice cooker

Task: Multi-turn Dependency Reasoning (MD)

0s-1s: What is shown in the beginning of the video?

0s-1s: A photo of a man and a woman smiling

1s-4s: What does the background voice talk about after the scene showing A photo of a man and a woman smiling?

1s-4s: Law enforcement is in place

1s-4s: What does the video show while the background voice mentions Law enforcement is in place?

1s-4s: Three men driving a ship on the sea

Task: Speaker Identification (SI)

Who welcomed someone to Top Notch?

Marie

Task: Proactive Alerting (PA)

Notify me when the man in a gray top is walking around a garden in the video.

[6s-14s]

Task: Proactive Turn-taking (PT)

Where did I put the book?

On the bookshelf

Where was the ATM located?

Near the window

I guess we'll see how it goes, but I'm not too worried about it at the moment.?

[SILENCE]

Citation

@misc{omnimmi,

title={OmniMMI: A Comprehensive Multi-modal Interaction Benchmark in Streaming Video Contexts},

author={Wang, Yuxuan and Wang, Yueqian and Chen, Bo and Wu, Tong and Zhao, Dongyan and Zheng, Zilong},

publisher={CVPR},

year={2025}

}